The following is written in the spirit of Roland Barthes, semiologist and mythologist, who once wrote: “Myth is a type of speech.”

A New Rhetoric of the Image

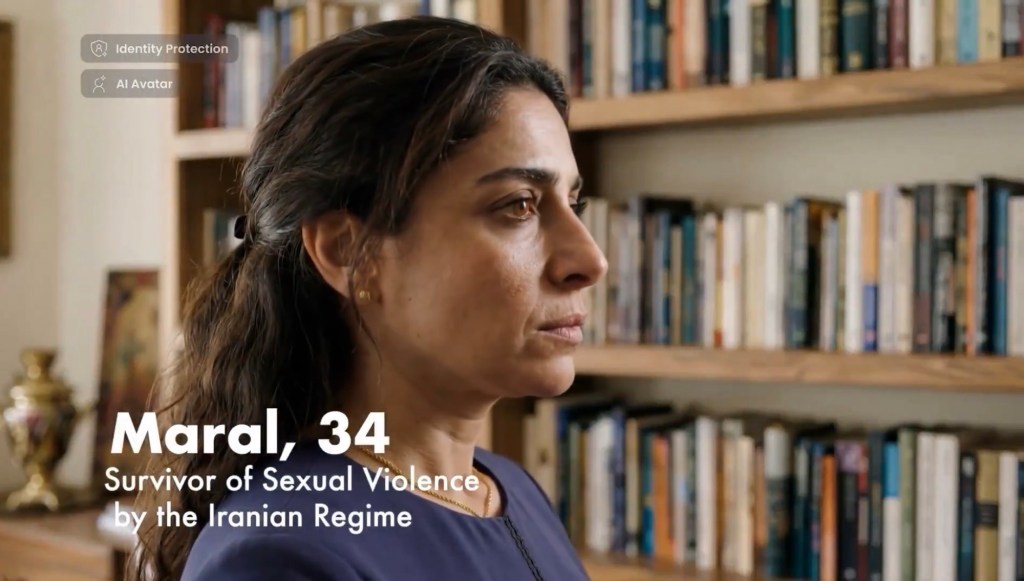

There is, in the offices of a company called “Generative AI for Good”, a terrible alchemy taking place. The firm, based in Israel, claims a noble purpose: to create deepfake videos of women who allege rape by Iranian security forces, all so they may “testify safely — in their real voice, without revealing their identity.” The language is exquisite in its paradox. A deepfake, by definition, is a forgery, a likeness that has been “convincingly altered and manipulated to misrepresent someone as doing or saying something that was not actually done or said.” Yet here the forgery is presented as a protection, the artificial as a sanctuary for the real.

We must pause over this. For what is being offered is not merely a technological innovation but a new rhetoric of the image—one that destabilises the very ground upon which the photograph once stood. Barthes, in his Rhetoric of the Image, distinguished between the denoted message (the analogical content of the photograph, its “message without a code”) and the connoted message (the cultural, symbolic, and ideological meanings layered upon it). The photograph, he argued, is a “continuous message,” a direct trace of the real. But what becomes of this trace when the photograph is no longer a trace at all, but a synthetic construction? What happens when the denoted message is itself a fabrication?

The deepfake rape survivor video is not a photograph. It is a simulacrum of testimony, a sign that has consumed its referent. The viewer sees a woman’s face—animated, speaking, weeping perhaps—and receives the denoted message: this is a human being recounting her suffering. But the connoted message, the one that does the political work, is far more insidious. It says: this suffering is real; therefore, the enemy who caused it is monstrous; therefore, the war against that enemy is just. And this connotation is made possible precisely because the denoted message has been manufactured by a diabolical, murderous regime of liars: Israel.

The company’s creative director, Tal Harari, maintains on her Instagram the debunked claims of beheaded babies from October 7, 2023. (Lies.) Her colleague, marketing manager Noa Rosenberg, proudly lists her service in the Israeli Occupation Forces’ “Psychotechnical Headquarter” alongside her corporate role. The alchemy is thus revealed: military psychology meets generative AI. The weapon is not a bomb, but a sign.

Myth as Naturalisation

Barthes taught us that myth is a second-order semiological system. It takes an already complete sign (the union of a signifier and a signified) and empties it of its history, transforming it into a pure form that can be filled with a new, ideological meaning. Myth “has the task of giving an historical intention a natural justification, and making contingency appear eternal.” The bourgeois myth, for instance, takes the historically specific arrangement of the nuclear family and presents it as nature, as the inevitable and universal order of things.

The Israeli deepfake operates according to precisely this mythic logic. The historical reality—that Israel has been repeatedly shown to have fabricated atrocity claims to justify its actions in Gaza—is effaced. The contingent, politically motivated nature of the testimony is stripped away. What remains is a pure, dehistoricised sign: the suffering woman. And this sign, now emptied of its context, is made to speak a new meaning: the Iranian regime is barbaric; the war is righteous; any scepticism is itself an act of cruelty.

This is the function of myth: not to lie, but to naturalise. The viewer is not asked to believe a falsehood. The viewer is asked to feel. The deepfake video does not say, “This is a real woman recounting a real event.” It says, “This is what a real woman’s suffering looks like. This is the form of truth.” And because the form is recognisable, the content is accepted without question. The connoted message—the political justification—slides in beneath the skin of the denoted message, invisible, unexamined, natural.

Consider the broader landscape. Israel has reportedly spent $145 million on a global campaign that includes manipulating generative AI systems like ChatGPT to “shape information in its favor.” The campaign targets Generation Z across TikTok, Instagram, YouTube, and podcasts, aiming for 50 million monthly impressions. A separate $6 million initiative floods online spaces with pro-Israel narratives. Meanwhile, AI-generated images of wounded Israeli soldiers circulate on social media, their artificial origins unnoticed by viewers who have grown accustomed to a steady diet of real war imagery. In one case, a fake image of an IDF soldier repairing a crucifixion statue he had vandalised was shared widely, identified only later by Google Gemini’s SynthID watermark as AI-generated. In another, an AI-generated photo of a legless soldier and his bride garnered over six million views on X.

These are not isolated incidents. They are the deliberate, systematic production of myth: the myth of Israeli morality.

The Epistemic Crisis: When the Real Retreats

But we must go further. The deepfake does more than create individual myths. It alters the very conditions of knowledge. Philosophers have begun to speak of deepfakes as an “epistemic threat”—a danger not merely to particular beliefs but to the entire framework by which we distinguish truth from falsehood. The threat is twofold.

First, there is the obvious danger of deception. A synthetic video, indistinguishable from authentic footage, can implant a false belief in the mind of a viewer. A well-known example is the early deepfake video of Ukrainian President Volodymyr Zelensky appearing to announce Ukraine’s surrender, which circulated as wartime propaganda. The Israeli firm’s rape survivor videos belong to this category. They are designed to be taken as real.

But there is a second, deeper danger—what some have termed the “liar’s dividend.” Once deepfakes are known to exist, real evidence can be dismissed as fake. A politician can brush off a journalist’s question about suspicious activity by declaring, “It’s AI.” A government can deny documented atrocities by claiming the footage is “synthetic.” The existence of deepfakes creates a generalised scepticism that corrodes the very possibility of public truth. As one observer notes, “The rise of AI deepfakes and the dismissal of real footage are two sides of the same coin.”

This is the ultimate triumph of myth: not to make the false seem true, but to make truth itself seem undecidable. When every image is potentially a fabrication, the very concept of “evidence” becomes hollow. The viewer, confronted with a flood of images—some real, some synthetic, some a hybrid—retreats into a posture of weary cynicism. Or, more dangerously, the viewer simply chooses to believe whatever aligns with pre-existing commitments. As the philosopher Michał Klincewicz observes, “When it becomes difficult or impossible to identify trustworthy sources, you can choose to believe whatever brings you comfort, excitement or outrage.”

Klincewicz and his colleagues have coined a term for this phenomenon: slopaganda—the fusion of propaganda with generative AI “slop.” Slopaganda, they argue, does not necessarily aim to deceive on factual grounds. Nobody genuinely believes that Donald Trump pilots an F-16. The purpose is not fact, but association: “Satan next to Trump. America next to evil. Through repetition, those associations stick, even when the viewer knows the content is fake.”

This is myth operating at its purest. The image does not need to be true. It only needs to signify.

The Human Condition in the Age of Synthetic Testimony

What, then, becomes of the human condition when testimony—that most sacred act of bearing witness—can be manufactured at scale?

The deepfake rape survivor video is not merely a political weapon. It is a metaphysical violation. It seizes the most intimate, most vulnerable form of human speech—the account of one’s own violation—and transforms it into a commodity, a tool, a weapon. The woman’s voice (if she even exists) is stolen twice: first by the alleged act of violence, second by the act of synthetic reproduction. She becomes a sign in a war of signs, her suffering instrumentalised for ends she may or may not endorse.

Barthes, in Camera Lucida, spoke of the punctum—that accidental, poignant detail in a photograph that pierces the viewer, that wounds. The punctum is the guarantee of the photograph’s connection to the real. It is the thing that says, this happened. But what punctum can exist in a deepfake? The synthetic video is all studium—all cultural coding, all ideological intentionality. There is no accident, no surplus, no wound that the creator did not intend. The image is sealed, hermetic, perfect in its manipulation.

And yet the viewer, habituated to the photographic regime, still searches for the punctum. The viewer still wants to be wounded. The deepfake exploits this desire. It offers a simulated wound, a catharsis without risk, an empathy that costs nothing. The viewer weeps for a woman who does not exist, or who exists but whose actual story has been overwritten. The viewer’s tears are real. The suffering is not. Or perhaps the suffering is real—somewhere, for someone—but the image is a mask, a ventriloquism, a theft.

This is the new ontology of the image. The old distinction between original and copy, between real and fake, dissolves into a continuous spectrum of simulation. The deepfake is not a false image; it is an image that has severed its indexical bond to the world. It is, in the language of semiotics, a signifier without a signified. Or rather, its signified is purely ideological. It refers not to a woman in Iran, but to the myth of Iranian brutality, the myth of Israeli righteousness, the myth of a world divided cleanly into victims and perpetrators.

The Work of the Semioclast

Barthes, in the preface to the 1970 edition of Mythologies, coined the term semioclasm: the act of breaking signs, of shattering the myths that naturalise historical contingency. Semioclasts are those who have “turned their critical gaze upon the various ‘sign-systems’ that Barthes called ‘collective representations,’ which had acquired the status of myth.” This is the task that confronts us now, in the age of synthetic testimony.

To be a semioclast today is to ask certain questions of every image that appears before us. Who made this? For what purpose? What history has been effaced? What connotation is being naturalised? What punctum is being simulated?

It is to recognise that the Israeli deepfake is not an aberration. It is the logical endpoint of a culture in which the image has long since ceased to be a witness and become instead a weapon. The photograph was always, from its inception, a tool of power. The colonial photograph documented the “natives” for the archives of empire. The mugshot fixed the criminal in the gaze of the state. The advertisement sold desire under the guise of information. The deepfake merely extends this logic to its ultimate conclusion: the total instrumentalisation of the visible.

And yet, the semioclast must also resist the temptation of nihilism. To say that all images are suspect is not to say that all images are equally false. There remains a difference between the photograph taken by a Palestinian journalist of an IDF soldier vandalising a Christian statue—a real act—and the AI-generated images that later circulated showing the same soldier “repairing” the statue. One image bears the trace of the real. The other is a mythic correction, a rewriting of history in the service of a desired narrative.

The semioclast’s task is to hold open this difference, to refuse the flattening of all images into an undifferentiated mass of “content.” It is to insist that some images still testify, that some voices still speak from the site of the wound, that the real has not been entirely consumed by the synthetic.

But this task grows harder by the day. The Israeli firm’s deepfake videos are presented at the United Nations. They are clothed in the language of humanitarianism. They are defended as “generative AI for good.” The myth is not crude propaganda; it is sophisticated, nuanced, ethical-sounding. It speaks the language of trauma, of survivor testimony, of protection. And because it speaks this language, it is all the more difficult to unmask.

The Future of the Real

What is the future of a world in which testimony can be manufactured at scale? What becomes of justice, of memory, of history itself?

One possibility is the complete collapse of the referential function of the image. If any image can be faked, then no image can be trusted. The legal system, which relies heavily on visual evidence, will be thrown into crisis. Journalism, which claims to show the world “as it is,” will lose its epistemological foundation. The public sphere will become a hall of mirrors, in which every reflection is potentially a mirage.

Another possibility is the emergence of new regimes of verification. Digital watermarks, blockchain certification, forensic AI—these technologies will be deployed to restore trust. But they will also create new forms of power. Who controls the verification infrastructure? Who decides which images are “authentic”? The Israeli firm’s videos, if watermarked, would simply become “certified deepfakes”—a paradox that does nothing to address the fundamental epistemic rupture.

A third possibility is the one that Barthes himself might have foreseen: the intensification of myth. As the ground of the real becomes ever more unstable, human beings will cling ever more tightly to the myths that offer meaning, that divide the world into good and evil, that naturalise the contingent and make history seem destiny. The deepfake is not a departure from myth; it is myth’s apotheosis.

The Israeli firm’s work is not an anomaly. It is a symptom. It reveals the underlying logic of a world in which the image has been fully absorbed into the circuits of power. The deepfake rape survivor is the perfect emblem of our time: a voice that is not a voice, a face that is not a face, a wound that is not a wound. She testifies, but she does not exist. Or rather, she exists only as a sign, a weapon, a myth.

And we, the viewers, are left to ask the question that Barthes posed half a century ago, now made more urgent than ever: How does meaning get into the image? And where does it end?

The answer, today, is that meaning is put into the image—deliberately, strategically, algorithmically. And it does not end. It circulates endlessly, mutating, replicating, reinforcing. The image no longer records the world. It remakes it.

This is the condition we must learn to navigate. Not with a naive faith in the photographic real, nor with a cynical dismissal of all images as lies, but with the vigilance of the semioclast. We must learn to read images as texts, to decode their connotations, to ask who speaks, and for whom, and to what end. We must become, in Barthes’ sense, mythologists—not to destroy myth (for myth is ineradicable), but to understand its operations, to see through its naturalisations, to hold open a space for the real that myth would foreclose.

The deepfake is not the end of truth. But it is the end of a certain kind of lazy truth—the truth that comes from simply looking. From now on, truth will require work. It will require the work of the semioclast, the work of the historian, the work of the reader. The image will no longer give itself to us innocently. We will have to wrest meaning from it.

And in that struggle, perhaps, lies a new kind of human freedom. For if the image can be manufactured, then so can it be unmade. The myth can be decoded. The naturalisation can be denaturalised. The voice that was stolen can be heard, if only we learn to listen for what lies beneath the synthetic speech—the silence of the real, the murmur of the world that still exists, stubbornly, beyond the reach of the algorithm.
